HiDPI on macOS and Ubuntu

April 12, 2023

It's been a good while since "HD" was acceptable for computer monitors.

Thing is, operating system people seem to have not really caught up to this idea. Not even Apple, whose "Retina" branding for HiDPI monitors is dangerously close to becoming generic like Kleenex is for facial tissues. (Who calls them "facial tissues", anyway?)

I've also been running Ubuntu Desktop for some things lately, and the situation isn't great there either—but here, I'm also not surprised in the slightest. After all, it's Linux, and if there's one thing I know about Linux, it's that the idea of it "just working" is the pipiest pipe dream ever piped.

Let's go over them, one at a time.

HiDPI in macOS

HiDPI in macOS actually works pretty well, for the most part. If you have an Apple display, or if you have a monitor that checks that magical "4K" checkbox, macOS will say "a-ha! this must be HiDPI!" and give you the good scaling.

Ok. But I have this lovely little… uh… "WQXGA" (would have loved to be in the room when that term was invented) 2560×1600 portable display. It's really convenient: it comes with a single USB-C cable that plugs into the side of my MacBook, and it's got lovely color.

macOS takes one look at it and says "welp, let's make everything ludicrously large on this display, eliminating any chance that an even slightly-tall application can fit on it."

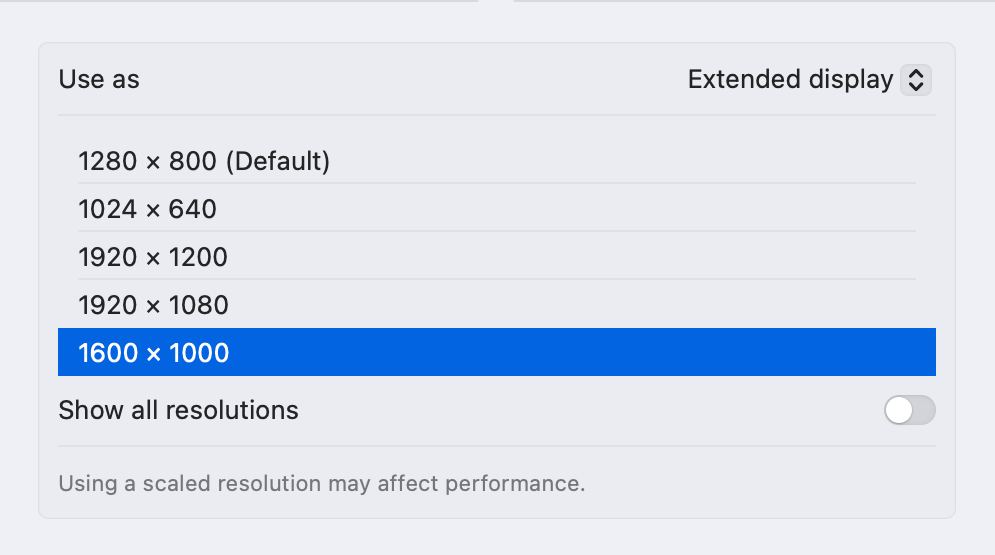

So I try to tell it not to treat it like a 1280×800 equivalent display, but rather something like 1600×1000. We're long past the days of needing integer scale factors; macOS should be able to handle this.

And, no. It looks terrible.

Thankfully, I've got BetterDisplay.

BetterDisplay was once called BetterDummy, which… to be completely honest, I still don't 100% get the "dummy display" thing, although I did use it on my headless Mac mini to tell it that it should pretend to have a HiDPI display instead of pretending to have a muddy 1080p one when I Screen Share into it.

The main thing I do with it is find the displays macOS doesn't think are HiDPI, and convince it that they actually are. That works like this:

- Open BetterDisplay's preferences.

- Under "Displays", find the monitor that macOS doesn't believe is HiDPI, and set "Edit the system configuration of this display". Apply as necessary.

- Find the offending display in the BetterDisplay menu.

- Under "Set Resolution", swap the ugly LoDPI for something beautiful from the HiDPI set.

That's it. And now it scales beautifully, as macOS is suddenly convinced that it's actually a good monitor after all.
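For the curious, the arithmetic behind those resolutions can be sketched in a few lines of shell. This is only an illustration: the function names are made up, and it assumes the usual description of macOS HiDPI modes, where the desktop is drawn at twice the "looks like" size and then resampled to the panel.

```shell
#!/bin/sh
# Hypothetical helpers for illustration; not part of macOS or BetterDisplay.

# scale_factor PANEL_WIDTH LOGICAL_WIDTH
# Prints how many panel pixels cover one logical point, to two decimals.
scale_factor() {
    awk -v panel="$1" -v logical="$2" 'BEGIN { printf "%.2f\n", panel / logical }'
}

# backing_size LOGICAL_WIDTH LOGICAL_HEIGHT
# Prints the 2x framebuffer a HiDPI mode is assumed to render
# before it is resampled down to the physical panel.
backing_size() {
    echo "$(( $1 * 2 ))x$(( $2 * 2 ))"
}

scale_factor 2560 1280   # the default "looks like 1280x800" mode
scale_factor 2560 1600   # the "looks like 1600x1000" mode
backing_size 1600 1000   # what gets rendered for the latter
```

On the 2560×1600 panel this prints 2.00, 1.60, and 3200x2000: the default mode is a clean integer scale, while the 1600×1000 mode is a fractional one that only looks good when it is rendered large and scaled down.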

HiDPI in Ubuntu

I have a completely different problem with Ubuntu. First, I'm not running it on bare metal; I'm running it in VMware Fusion on my MacBook.

Out of the box, VMware acts like an ancient LoDPI monitor. Whatever display size you set, it'll tell the virtual machine you've got 25% as many pixels (half the width, half the height) and then muddy it all up for you by scaling those pixels back up again.
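To put numbers on that 25%, here is a small sketch. It assumes a 2x Retina host, so the guest gets half the width and half the height; `guest_pixels` is a made-up name, not anything VMware ships.

```shell
#!/bin/sh
# guest_pixels HOST_WIDTH HOST_HEIGHT
# Assumes a 2x Retina host: the guest is handed half of each dimension.
guest_pixels() {
    gw=$(( $1 / 2 ))
    gh=$(( $2 / 2 ))
    # Share of the host's physical pixels the guest actually renders.
    pct=$(( 100 * gw * gh / ($1 * $2) ))
    echo "${gw}x${gh} (${pct}% of the pixels)"
}

guest_pixels 2560 1600
```

For a 2560×1600 window, the guest is told it has 1280x800, a quarter of the real pixel count.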

There's a switch that'll change this behavior for you. Under the VM settings, Display, turn on "Use full resolution for Retina display".

And… everything's tiny. Okay, but no problem, this is not unheard-of. Take a trip into Ubuntu's Settings app, Displays, and scale up to 200%. Great! This is exactly what we want.

Until you reboot.

What we need to do is make the setting stick. As I tend to do with all things Linux, I started rooting around random forums to find something someone did that maybe kind of worked and then set about improving it.

I found this idea that involves telling people to use sudo without really explaining what's going on, which is a huge red flag in my book. But, ok, there's something about it that works. Let's see what that is. I'm going to try to get something similar up and running without sudo.

First hit explains why the whole glib-compile-schemas thing is a bad idea. In my many years of Linuxing from days past, this rings entirely true. This is a gross hack that isn't designed to survive upgrades in any way, and if there's one thing I want to be in my system adventures, it's as clean as I can be.

A little more digging, and I find that dconf is involved, but I'm still also firmly in system administrator land. Can I, as a user, just set and forget this?

Turns out, yes! And it's really simple:

gsettings set org.gnome.desktop.interface scaling-factor 2

I don't quite get what the difference is between this and the Scale setting in Settings, but this one is persistent, unlike the latter. And now, my Ubuntu display is permanently readable as well.
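To check after a reboot that the value really did stick, something like the following might do. It is a sketch, not a polished tool: `parse_factor` and `ensure_factor` are names invented here, and it assumes `gsettings get` reports this key with a type prefix, as in `uint32 2`.

```shell
#!/bin/sh
SCHEMA=org.gnome.desktop.interface
KEY=scaling-factor

# parse_factor VALUE
# Drops a leading type prefix if there is one: "uint32 2" -> "2".
parse_factor() {
    echo "${1##* }"
}

# ensure_factor WANTED
# Reads the current value and writes the key only if it differs.
ensure_factor() {
    raw=$(gsettings get "$SCHEMA" "$KEY") || return 1
    current=$(parse_factor "$raw")
    echo "current $KEY: $current"
    [ "$current" = "$1" ] || gsettings set "$SCHEMA" "$KEY" "$1"
}

# Only touch anything when gsettings is actually installed.
if command -v gsettings >/dev/null 2>&1; then
    ensure_factor 2 || echo "could not read or set $KEY" >&2
else
    echo "gsettings not found; nothing to do" >&2
fi
```

Everything here runs as the logged-in user, with no sudo involved. Note that this key is an integer, so it covers 2 but not something like 1.5.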